Building AI Agents to Gather and Structure Data

Problem

A start-up preparing to launch a national marketplace and directory for small independent providers faced a critical pre-launch challenge: its platform needed to be populated with rich, detailed provider information before it could go live — but that information didn't exist in any single place.

Provider data was scattered across websites, Facebook pages, and a range of other online sources. Additionally, each provider presented their information differently, with no consistent structure or format. Aggregating it at the scale required, within a viable timeframe, was not something that could be done manually.

Solution

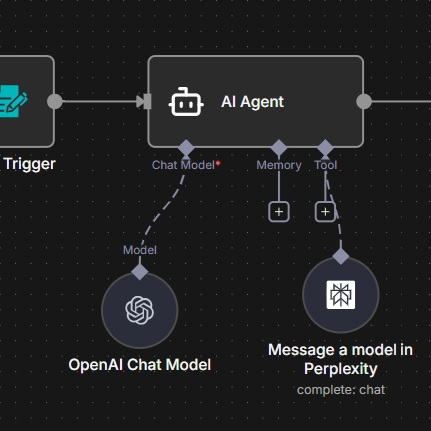

To solve this, I designed and built an agentic workflow using n8n. The start-up was given a simple interface through which they could select a target region of the UK and specify the type of service providers they wanted to find. From there, the process ran automatically.

The AI agent — powered by OpenAI — interpreted the request and used Perplexity to search the web, gathering relevant provider information from across the internet and generating concise, AI-written summaries of each service. That raw output was then passed back through OpenAI for a second processing stage, which cleaned, validated, and structured the data against a predefined schema.
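
The second-stage "clean, validate, and structure" step can be illustrated with a minimal Python sketch. It is an illustration only, not the production n8n workflow: the `Provider` fields, the `REQUIRED` tuple, and the `structure_record` helper are all hypothetical names chosen for the example, and the real schema may differ.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class Provider:
    """Hypothetical target schema; the real field list is an assumption."""
    name: str
    service_type: str
    region: str
    address: Optional[str] = None
    website: Optional[str] = None
    summary: Optional[str] = None


# Fields that must be present for a record to be accepted (assumed).
REQUIRED = ("name", "service_type", "region")


def structure_record(raw: dict) -> Provider:
    """Clean a raw record and map it onto the Provider schema."""
    allowed = {f.name for f in fields(Provider)}
    cleaned = {}
    for key, value in raw.items():
        # Normalise keys such as "Service Type" to "service_type".
        key = key.strip().lower().replace(" ", "_")
        if key not in allowed:
            continue  # drop anything the schema does not define
        if isinstance(value, str):
            value = " ".join(value.split())  # collapse stray whitespace
        cleaned[key] = value or None  # treat empty values as missing
    missing = [k for k in REQUIRED if not cleaned.get(k)]
    if missing:
        raise ValueError(f"missing required fields: {missing}")
    return Provider(**cleaned)
```

Rejecting records that lack required fields, rather than inserting them half-empty, keeps bad rows out of the database and gives a clear signal for which searches need to be re-run.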

The agent then converted the structured data into JavaScript formatted specifically to enable insertion into the database — removing any need for manual data entry or transformation.
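
The workflow itself emitted JavaScript for this step. The Python sketch below shows the same idea in a different language: turning one structured record into a parameterised insert. The `providers` table, the column list, and the use of an in-memory SQLite database are assumptions made for the example, since the real database and schema are not described here.

```python
import sqlite3

# Hypothetical column list; the real schema is an assumption.
COLUMNS = ("name", "service_type", "region", "address", "summary")


def build_insert(record: dict, table: str = "providers"):
    """Return a parameterised INSERT statement and its values."""
    # The table name is a trusted constant; only values are parameterised.
    placeholders = ", ".join("?" for _ in COLUMNS)
    sql = f"INSERT INTO {table} ({', '.join(COLUMNS)}) VALUES ({placeholders})"
    # Missing fields become NULL rather than raising an error.
    values = tuple(record.get(col) for col in COLUMNS)
    return sql, values


# Demonstration against an in-memory SQLite database (a stand-in only).
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE providers "
    "(name TEXT, service_type TEXT, region TEXT, address TEXT, summary TEXT)"
)
sql, values = build_insert(
    {"name": "Example Provider", "service_type": "childcare", "region": "Yorkshire"}
)
conn.execute(sql, values)
```

Passing values as parameters instead of concatenating them into the statement matters here because the text originates from scraped web content, which should never be trusted inside a query string.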

Once the records were in the database, a geocoding API call, triggered manually, resolved each provider's address into latitude and longitude coordinates, so that interactive maps could be embedded directly in provider profile pages.
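
A rough sketch of the address-to-coordinates step is shown below. The geocoding service is not named in this write-up, so the lookup is passed in as a function: `add_coordinates`, `fake_geocode`, the field names, and the coordinate values are all hypothetical placeholders, and a real implementation would swap `fake_geocode` for a call to the chosen API.

```python
from typing import Callable, Optional, Tuple

Coordinates = Tuple[float, float]
Geocoder = Callable[[str], Optional[Coordinates]]


def add_coordinates(providers: list, geocode: Geocoder) -> list:
    """Return copies of the provider records with latitude/longitude added."""
    enriched = []
    for provider in providers:
        record = dict(provider)  # copy so the input is left untouched
        address = record.get("address")
        coords = geocode(address) if address else None
        # Records without a resolvable address keep empty coordinates.
        record["latitude"], record["longitude"] = coords if coords else (None, None)
        enriched.append(record)
    return enriched


def fake_geocode(address: str) -> Optional[Coordinates]:
    """Stand-in for a real geocoding API; the values are placeholders."""
    lookup = {"1 Example Street, Leeds": (53.8, -1.55)}
    return lookup.get(address)


results = add_coordinates(
    [
        {"name": "Example Provider", "address": "1 Example Street, Leeds"},
        {"name": "No Address Provider", "address": None},
    ],
    fake_geocode,
)
```

Keeping the geocoder as an injected function makes the step easy to test without network access and leaves the choice of provider open.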

Result

As expected, the agent required an iterative process of training and evals before it performed reliably, but the core capability held up well: it correctly identified the right information and mapped it to the right fields at scale.

Some limitations remain. Certain fields are occasionally left unpopulated, and where a provider appears in multiple locations across the web, their information can surface more than once in the output. Both issues have clear solutions.

The next phase of development will add one or more agents to the workflow to address these gaps. One will handle deduplication, removing duplicate provider entries before they reach the database. Another will use an alternative AI model to identify and fill blank fields, drawing on the strengths of a different model where the first has failed.
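
As a rough illustration of how the deduplication and blank-field checks might work, the Python sketch below merges records that share a normalised name and postcode, then lists the fields that remain empty. This is one possible rule-based approach, not the planned agent design: the matching key, the field names, and the helper functions are all assumptions.

```python
import re


def dedupe_key(record: dict) -> tuple:
    """Build a matching key from a normalised name and postcode (assumed rule)."""
    name = re.sub(r"[^a-z0-9]+", " ", (record.get("name") or "").lower()).strip()
    postcode = re.sub(r"\s+", "", (record.get("postcode") or "").upper())
    return (name, postcode)


def deduplicate(records: list) -> list:
    """Merge records with the same key, keeping the first non-empty value."""
    merged = {}
    for record in records:
        key = dedupe_key(record)
        if key not in merged:
            merged[key] = dict(record)
            continue
        existing = merged[key]
        for field, value in record.items():
            # Fill gaps in the first-seen record from later duplicates.
            if not existing.get(field) and value:
                existing[field] = value
    return list(merged.values())


def blank_fields(record: dict, schema_fields: list) -> list:
    """List the schema fields that are still empty for a record."""
    return [f for f in schema_fields if not record.get(f)]
```

For example, `deduplicate` would collapse "Oak Tree Nursery" and "oak tree nursery." at the same postcode into one entry, and `blank_fields` would then report which fields a second model still needs to fill. Records with neither a name nor a postcode all share one key under this rule, so they would need separate handling.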